Introduction: Beyond the Hype: Understanding the Core of AI

In an era when Artificial Intelligence (AI) dominates headlines, from groundbreaking scientific discoveries to its pervasive presence in daily life, it’s easy to get lost in the hype. Yet beneath the sophisticated algorithms and futuristic visions lies a long history of innovation and a set of fundamental principles that govern this transformative technology. This guide aims to demystify AI by tracing its history and exploring the diverse applications that are redefining industries and human potential. Far from being a mere buzzword, AI represents a profound shift in how we interact with technology and solve complex problems. Join us as we move beyond generic definitions to look at where AI came from, what it does today, and where it may be heading.

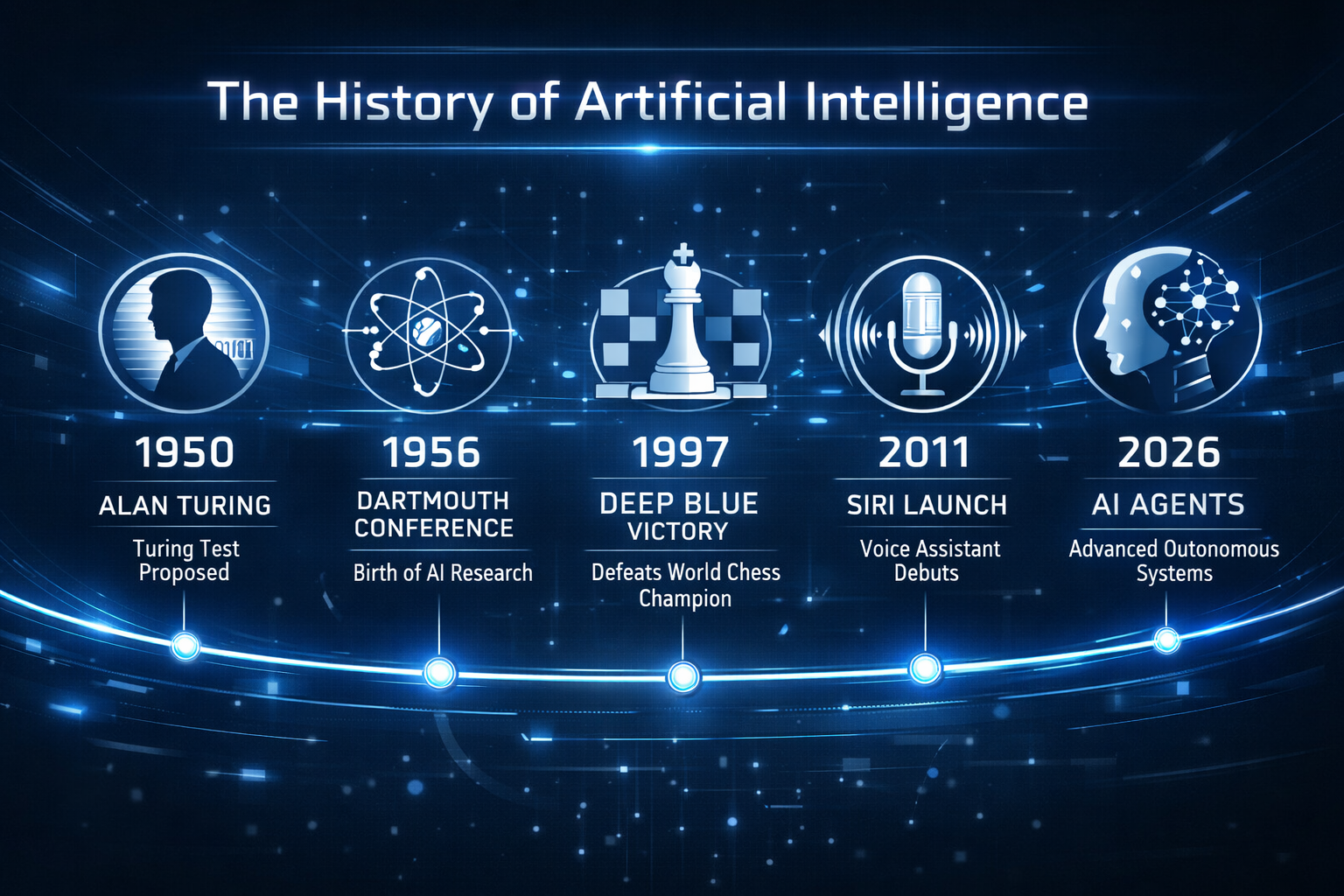

The Genesis of Intelligence: A Journey Through AI History

The story of Artificial Intelligence is not a recent phenomenon but a rich tapestry woven over decades, marked by visionary thinkers, ambitious projects, and periods of both fervent optimism and challenging setbacks. Understanding this AI history is crucial to appreciating its current capabilities and future trajectory.

Our journey begins in the mid-20th century, a period often considered the dawn of AI. The conceptual groundwork was laid by brilliant minds grappling with the very definition of intelligence and how it could be replicated in machines.

The Turing Test and the Dartmouth Conference: Birth of a Field

One cannot discuss AI history without acknowledging Alan Turing, the British mathematician and computer scientist. In 1950, Turing proposed what he called the “Imitation Game,” now far better known as the Turing Test. In this thought experiment, a human interrogator converses by text with both a human and a machine and tries to determine which is which. If the interrogator cannot reliably distinguish the machine from the human, the machine can be said to exhibit intelligent behavior. Turing’s work provided a philosophical and practical benchmark for machine intelligence long before the technology existed to realize his vision.

The term “Artificial Intelligence” itself was coined by John McCarthy in the 1955 proposal for the seminal Dartmouth Conference, held in the summer of 1956. Organized by McCarthy together with Marvin Minsky, Nathaniel Rochester, and Claude Shannon, this workshop brought together leading researchers from various fields to explore the possibility of creating “thinking machines.” It is widely regarded as the official birth of AI as an academic discipline, setting the stage for decades of research and development. The proposal rested on a bold conjecture: that “every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.”

Early Triumphs and the First AI Winter

The initial excitement generated by the Dartmouth Conference led to a period of rapid progress. The 1960s and early 1970s saw the emergence of early AI programs that, while rudimentary by today’s standards, demonstrated significant potential. One notable example was ELIZA, created by MIT computer scientist Joseph Weizenbaum in 1966. ELIZA was a pioneering chatbot designed to simulate a psychotherapist: it matched keywords in the user’s input and rephrased their statements as questions. Its conversations were surprisingly convincing, and many users attributed genuine understanding to the program, highlighting the early power of even simple AI. Another significant development was Shakey the Robot (1966-1972), the first mobile robot able to reason about its own actions, navigating its environment using sensors and a TV camera.
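
ELIZA’s central trick, matching a pattern in the user’s statement and reflecting their own words back as a question, is simple enough to sketch in a few lines. The Python below is a toy illustration in that spirit, not a reconstruction of Weizenbaum’s original program; the rules, the pronoun table, and the helper names `reflect` and `respond` are assumptions made for this example.

```python
import re

# Pronoun swaps used to "reflect" the user's words back at them.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my", "myself": "yourself",
}

# Hypothetical ELIZA-style rules: (pattern, response template).
# The first pattern that matches the whole statement wins.
RULES = [
    (r"i feel (.*)", "Why do you feel {0}?"),
    (r"i am (.*)", "How long have you been {0}?"),
    (r"my (.*)", "Why do you say your {0}?"),
]


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in a captured fragment."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.split())


def respond(statement: str) -> str:
    """Turn a user's statement into a question using the first matching rule."""
    cleaned = statement.lower().strip().rstrip(".!?")
    for pattern, template in RULES:
        match = re.fullmatch(pattern, cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return "Please tell me more."  # fallback when no rule matches


print(respond("I feel anxious about my exams."))
```

For the input above, `respond` returns "Why do you feel anxious about your exams?" The program has no model of meaning at all; the illusion of understanding comes entirely from pattern matching and pronoun swapping.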

However, this early optimism was tempered by the limitations of computing power and the complexity of real-world problems. In 1973, a critical report by applied mathematician Sir James Lighthill highlighted the gap between AI research’s promises and its results, prompting deep cuts to AI funding in the United Kingdom, while funding in the United States was also scaled back. The resulting slowdown, lasting roughly from 1974 to 1980, became known as the first “AI Winter,” a time when progress stalled because ambitious expectations had outrun the technology. A second winter followed in the late 1980s and lasted into the early 1990s.

Resurgence and the Age of Deep Learning

The AI Winter eventually thawed, driven by advances in computational power, new algorithmic approaches, and the availability of vast datasets. The 1980s and 1990s saw renewed interest, with milestones like Ernst Dickmanns’ creation of one of the first self-driving cars in 1986, albeit a rudimentary one. A watershed moment arrived in 1997, when IBM’s Deep Blue, a chess-playing computer, defeated reigning world chess champion Garry Kasparov. Deep Blue’s ability to evaluate 200 million chess positions per second showcased the immense processing power that machines could bring to complex problem-solving.

The 21st century ushered in an unprecedented era of AI growth, fueled largely by the rise of Machine Learning (ML) and particularly Deep Learning. Sophisticated neural networks, loosely inspired by the structure of the human brain, allowed AI systems to learn from massive amounts of data with remarkable accuracy. This period saw the emergence of intelligent personal assistants like Apple’s Siri (2011) and, in 2016, the victory of DeepMind’s AlphaGo over Lee Sedol, one of the world’s strongest Go players. Because Go has vastly more possible positions than chess, the feat was considered far harder than Deep Blue’s.

Most recently, the world has witnessed the explosion of Generative AI, with OpenAI’s GPT models (the basis of ChatGPT) and DALL-E capturing the public imagination. These systems can create novel content, from human-like text and code to realistic images and videos, marking a new frontier in AI capabilities. The year 2026 is even being discussed as a potential turning point for the emergence of Artificial General Intelligence (AGI) and the widespread adoption of AI agents.

This journey through AI history reveals a cyclical pattern of innovation, expectation, and refinement. Each phase has built upon the last, leading us to the sophisticated and impactful AI landscape we navigate today. The foundational concepts developed by early pioneers continue to inform the cutting-edge AI applications that are now transforming every facet of our lives.

Key Milestones in AI History

| Era | Milestone | Significance |
| --- | --- | --- |
| 1950 | Turing Test | Established a benchmark for machine intelligence. |
| 1956 | Dartmouth Conference | Officially founded the field of Artificial Intelligence. |
| 1966 | ELIZA chatbot | Demonstrated early potential for human-computer interaction. |
| 1974-1980 | First AI Winter | Period of reduced funding and interest due to unmet expectations. |
| 1997 | Deep Blue vs. Kasparov | Showcased the power of massive computational search in complex games. |
| 2011 | Siri (Apple) | Brought AI-powered personal assistants to the mainstream. |
| 2016 | AlphaGo (DeepMind) | Showed AI could master Go, a game long thought to require human intuition. |
| 2022-Present | Generative AI explosion | Models like ChatGPT and DALL-E redefined creative AI. |
| 2026 (predicted) | AI agents and AGI discussions | Anticipated shift toward autonomous AI collaborators. |

AI Applications: Reshaping Industries and Daily Life

From powering our smartphones to revolutionizing healthcare, AI applications are no longer confined to science fiction; they are an integral part of our present and are rapidly shaping our future. The breadth and depth of AI’s impact are truly astounding, touching nearly every sector imaginable. Let’s explore some of the most significant and emerging AI applications that are redefining how we live, work, and interact with the world.

AI in Everyday Life: The Unseen Hand

Many of us interact with AI daily without even realizing it. Consider the personalized recommendations on streaming platforms, the intelligent spam filters in our email inboxes, or the voice assistants that answer our queries. These are all powered by sophisticated AI algorithms working silently in the background. AI enhances our digital experiences, making them more intuitive, efficient, and tailored to our individual needs.

• Personalized Recommendations: AI algorithms analyze our past behavior and preferences to suggest movies, music, products, or news articles, creating a highly customized user experience.

• Natural Language Processing (NLP): This branch of AI enables machines to understand, interpret, and generate human language. It powers chatbots, language translation tools, and sentiment analysis, allowing for more natural human-computer interaction.

• Computer Vision: AI systems can “see” and interpret images and videos. This capability is used in facial recognition, object detection, and advanced camera features on our phones.
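
To make the recommendation idea concrete, here is a minimal user-based collaborative-filtering sketch, one common technique among the many that real platforms combine. It assumes a tiny hypothetical ratings table; the users, titles, and the `cosine` and `recommend` helpers are illustrative, not any platform’s actual method.

```python
from math import sqrt

# Hypothetical toy data: user -> {title: rating on a 1-5 scale}.
RATINGS = {
    "ana": {"Alien": 5, "Heat": 4, "Up": 1},
    "ben": {"Alien": 4, "Heat": 5, "Ronin": 4},
    "chloe": {"Up": 5, "Coco": 4, "Alien": 1},
}


def cosine(a: dict, b: dict) -> float:
    """Cosine similarity of two sparse rating vectors (unrated items count as 0)."""
    dot = sum(r * b[item] for item, r in a.items() if item in b)
    norm_a = sqrt(sum(r * r for r in a.values()))
    norm_b = sqrt(sum(r * r for r in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def recommend(user: str, ratings: dict, k: int = 2) -> list:
    """Rank items the user hasn't rated by similarity-weighted ratings from others."""
    mine = ratings[user]
    scores = {}
    for other, theirs in ratings.items():
        if other == user:
            continue
        sim = cosine(mine, theirs)
        if sim <= 0:
            continue  # no overlap in taste, so this user's ratings carry no signal
        for item, rating in theirs.items():
            if item not in mine:
                scores[item] = scores.get(item, 0.0) + sim * rating
    return sorted(scores, key=scores.get, reverse=True)[:k]


print(recommend("ana", RATINGS))
```

Here `recommend("ana", RATINGS)` returns `['Ronin', 'Coco']`: ana’s ratings resemble ben’s far more than chloe’s, so ben’s favorite outranks chloe’s. Production systems add refinements such as mean-centering ratings, but the core principle of scoring unseen items by what similar users liked is the same.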

Transforming Industries: AI as a Catalyst for Innovation

Beyond personal convenience, AI applications are driving monumental shifts across various industries, optimizing processes, fostering innovation, and solving complex challenges that were once thought insurmountable.

Healthcare: A New Era of Diagnostics and Treatment

AI is poised to help shrink the world’s health gap, moving beyond traditional diagnostics into areas like symptom triage and treatment planning. Imagine AI systems helping doctors identify diseases with remarkable accuracy or personalizing treatment plans based on a patient’s unique genetic makeup and medical history. Microsoft’s AI Diagnostic Orchestrator (MAI-DxO), for instance, has reportedly achieved 85.5% accuracy on a benchmark of complex medical cases, well above the average of the physicians evaluated on the same cases. This isn’t about replacing human medical professionals but augmenting their capabilities, making healthcare more accessible, efficient, and precise.

Scientific Research: Accelerating Discovery

In the realm of scientific discovery, AI is becoming an indispensable partner. It’s no longer just about crunching numbers; AI is now generating hypotheses, controlling scientific experiments, and collaborating with human researchers in fields like climate modeling, molecular dynamics, and materials design. This acceleration of the research process promises breakthroughs in areas critical to humanity’s future, from new medicines to sustainable energy solutions.

Cybersecurity: Fortifying Digital Defenses

As cyber threats grow in sophistication, AI is stepping up to fortify our digital defenses. AI agents are being developed with their own identities and safeguards, acting as digital coworkers to detect and respond to threats faster than ever before. This proactive approach to security is crucial in an increasingly interconnected world, ensuring that our data and systems remain protected.

Business and Finance: Optimization and Insight

In business, AI optimizes everything from supply chain management and customer service to financial fraud detection and market analysis. AI-powered tools can process vast amounts of data to identify trends, predict market shifts, and automate routine tasks, freeing up human capital for more strategic initiatives. In finance, AI algorithms can detect fraudulent transactions in real time, protecting consumers and institutions alike.
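
Real-time fraud screening can be illustrated with a deliberately simple statistical stand-in: flag a transaction whose amount sits far above an account’s running average. This sketch uses Welford’s online mean and variance update with a z-score threshold. The threshold of 3, the five-transaction warm-up, and the names `RunningStats`, `is_suspicious`, and `screen` are assumptions for illustration; real systems typically rely on trained models over many more signals than the amount alone.

```python
from math import sqrt


class RunningStats:
    """Welford's online algorithm: running mean and variance without storing history."""

    def __init__(self) -> None:
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def std(self) -> float:
        # Sample standard deviation; undefined (treated as 0) for fewer than 2 values.
        return sqrt(self.m2 / (self.n - 1)) if self.n > 1 else 0.0


def is_suspicious(stats: RunningStats, amount: float,
                  threshold: float = 3.0, min_history: int = 5) -> bool:
    """Flag an amount more than `threshold` standard deviations above the mean."""
    if stats.n < min_history or stats.std == 0:
        return False  # not enough history (or no variation) to judge
    return (amount - stats.mean) / stats.std > threshold


def screen(amounts: list, threshold: float = 3.0) -> list:
    """Screen one account's transaction stream; return indices of flagged amounts."""
    stats = RunningStats()
    flagged = []
    for i, amount in enumerate(amounts):
        if is_suspicious(stats, amount, threshold):
            flagged.append(i)
        else:
            stats.update(amount)  # learn only from transactions judged normal
    return flagged
```

Calling `screen([20, 25, 22, 30, 18, 24, 21, 950, 23])` flags index 7, the 950 charge. Skipping the update for flagged amounts keeps a single outlier from inflating the very baseline it is judged against, and because each update is constant-time, the check can run as each transaction arrives.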

Transportation: The Road to Autonomy

The dream of autonomous vehicles, pioneered by Ernst Dickmanns’ driverless car in 1986, is steadily becoming a reality through advances in AI. Self-driving cars, AI-powered traffic management systems, and optimized logistics are transforming how we move people and goods, promising safer, more efficient, and more environmentally friendly transportation networks.

The Rise of AI Agents and the Future Landscape

The year 2026 is anticipated to be a pivotal moment for AI, with the proliferation of AI agents that act more like teammates than mere tools. These agents are envisioned as digital coworkers, capable of handling data crunching, content generation, and personalization, allowing human teams to focus on strategy and creativity. This shift suggests a future where human and AI collaboration becomes the norm, amplifying human potential rather than replacing it.

The ongoing advancements in generative AI, capable of creating realistic text, images, and even videos, continue to push the boundaries of what machines can achieve. Furthermore, the convergence of AI with other cutting-edge technologies, such as quantum computing, promises even more profound transformations. Hybrid computing, where quantum machines work alongside AI and supercomputers, could unlock solutions to society’s toughest challenges in materials science, medicine, and beyond.

These diverse AI applications underscore a fundamental truth: AI is not a singular technology but a vast and evolving field with the potential to redefine nearly every aspect of our existence. Understanding its fundamentals and appreciating its historical journey provides the context necessary to navigate this exciting and rapidly changing landscape.

Modern AI Applications Across Industries (2026 Trends)

| Industry | AI Application | Key Impact |
| --- | --- | --- |
| Healthcare | Microsoft AI Diagnostic Orchestrator (MAI-DxO) | Reported 85.5% accuracy on complex medical cases. |
| Science | AI lab assistants | Generating hypotheses and controlling experiments in physics and biology. |
| Cybersecurity | Autonomous security agents | Proactive threat detection with built-in safeguards. |
| Business | AI coworkers/agents | Amplifying human teams to launch global campaigns in days. |
| Computing | Quantum-AI hybrid | Potential breakthroughs in molecular modeling and materials science. |
| Daily Life | Personalized AI companions | Tailored recommendations and intuitive digital experiences. |

Navigating the AI Frontier

From its theoretical inception in the mid-20th century to its current role as a transformative force across industries and daily life, Artificial Intelligence has proven to be one of humanity’s most ambitious and impactful endeavors. The journey through AI history reveals a persistent quest to imbue machines with intelligence, a quest that has overcome significant challenges and yielded astonishing breakthroughs. Today, the landscape of AI applications is vast and ever-expanding, promising a future where human ingenuity is amplified by intelligent machines.

As we stand on the cusp of new AI frontiers, including the potential emergence of Artificial General Intelligence and the widespread adoption of AI agents, it becomes increasingly vital for individuals and organizations to understand its fundamentals. This understanding is not merely academic; it is essential for navigating the ethical considerations, harnessing the immense potential, and mitigating the risks associated with this powerful technology. The future of AI is not predetermined; it is a narrative we are collectively writing, one innovation, one application, and one ethical consideration at a time.

What are your thoughts on the future of AI? How do you see AI applications impacting your industry or daily life? Share your insights in the comments below! If you’re eager to delve deeper into the world of AI, explore our other articles on machine learning and data science, or consider enrolling in a foundational AI course to further your understanding.

References

[1] Coursera. (2025, October 15). The History of AI: A Timeline of Artificial Intelligence.

[2] Microsoft Source. (2025, December 8). What’s next in AI: 7 trends to watch in 2026.